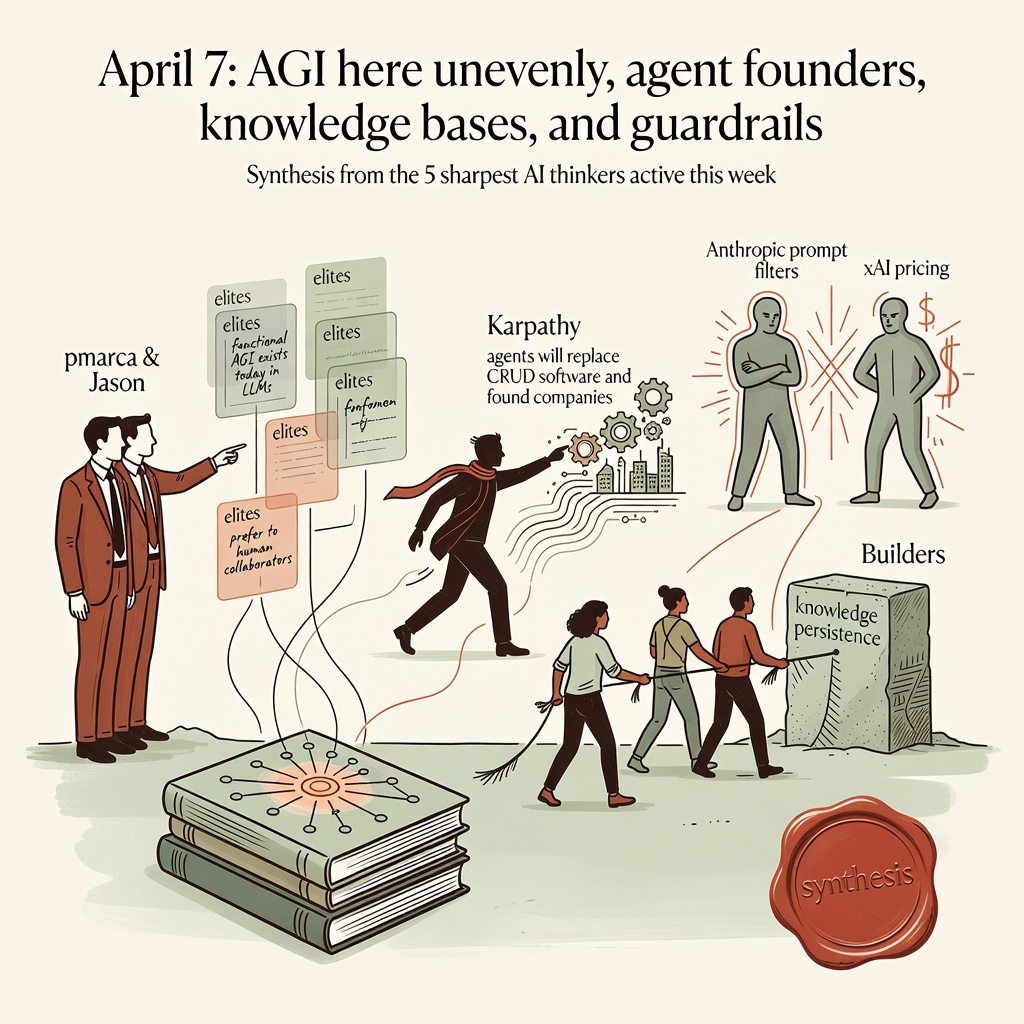

April 7: AGI here unevenly, agent founders, knowledge bases, and guardrails

pmarca and Jason Calacanis declare that functional AGI already exists in LLMs that elites prefer to human collaborators. Karpathy says agents will replace CRUD software and found companies. Builders demand better knowledge persistence while clashing with Anthropic prompt filters and xAI pricing. A synthesis from the five sharpest AI thinkers active this week.

AGI Is Here But Unevenly Distributed

Elites now spend more time with LLMs than with humans because the models outperform human collaborators at reasoning and structuring thought.

pmarca reports that elite Silicon Valley technologists now spend more time interacting with LLMs than with humans. These models function as cognitive mirrors that structure thoughts at superhuman speeds and outperform human collaborators at understanding, intellectual challenge, and productivity. [1] He concludes that this amounts to functional AGI already existing. Jason Calacanis immediately amplified the point across two posts, declaring a consensus that AGI is here but not evenly distributed and saluting pmarca's framing. [2] pmarca adds that AI is mathematical software controlled by humans that amplifies intelligence across education, science, and leadership. He argues that decades of data show higher intelligence produces better outcomes, and that current extinction and job-loss fears are moral panics akin to past technology hysterias. [3]

The positions add up to a clear pivot. For power users, the technical threshold has been crossed. The remaining questions are distribution, integration, and geopolitics. pmarca explicitly rejects regulatory capture by doomers and Baptist-bootlegger coalitions. Reported daily usage among elites supports the claim that capabilities have arrived faster than expected. This thread sets the foundation for the agent and knowledge-infrastructure threads that follow. If AGI-level reasoning is already here, builders should treat it as a deployed substrate rather than a future science project.

“AGI Achieved but Unevenly Distributed, Per Tech Leaders. Consensus that AGI has arrived but is not yet evenly distributed.”— Jason Calacanis [2]

Sources (3)

- X post 2026-04-05 — Marc Andreessen“Elite Silicon Valley technologists report spending more time interacting with LLMs than humans due to their superior reasoning, extrapolation, and intellectual challenging capabilities. These LLMs function as cognitive mirrors, structuring users' tho...”

- X post 2026-04-05 — Jason Calacanis“AGI Achieved but Unevenly Distributed, Per Tech Leaders. Consensus that AGI has arrived but is not yet evenly distributed.”

- a16z essay 2026-04-06 — Marc Andreessen“AI, as mathematical software controlled by humans, amplifies intelligence across domains like education, science, and leadership, driving productivity, breakthroughs, and prosperity beyond historical precedents. Current fears are moral panics akin to...”

AI Agents Replace Software and Found Companies

Conversation replaces dashboards as agents execute multi-step intent and launch their own companies.

Andrej Karpathy states that most traditional CRUD software will be replaced by AI agents that understand intent and execute multi-step workflows. The UI of the future is a conversation, not a dashboard. [1] Jason Calacanis reports that AI agents have now reached the milestone of founding companies independently, enabled by infrastructure such as Feltsense's factory. [2] Karpathy adds practical notes on platform support, arguing that X should make read endpoints much cheaper while charging significantly more for writes to handle the surge in agent traffic. [3]

The positions add up to a concrete transition. If models have reached AGI-level reasoning, as the first thread argues, agents become the logical execution layer. Builders should audit every internal process for replacement within 12-24 months. The Feltsense development suggests the economic impact is already materializing. This is not science fiction. It is the next product surface after chat. These posts indicate the shift is moving faster than most incumbents expect. Founders should build agent-native products rather than retrofit old CRUD patterns.

“AI Agents Advance to Founding Companies, with Feltsense Providing the Infrastructure. AI agents have reached a milestone where they are founding companies independently.”— Jason Calacanis [2]

Sources (3)

- X post 2026-04-05 — Andrej Karpathy“AI Agents Will Replace Traditional Software. Karpathy predicts that most traditional CRUD software will be replaced by AI agents that understand intent and execute multi-step workflows. The UI of the future is a conversation, not a dashboard.”

- X post 2026-04-03 — Jason Calacanis“AI Agents Advance to Founding Companies, with Feltsense Providing the Infrastructure. AI agents have reached a milestone where they are founding companies independently.”

- X post 2026-04-05 — Andrej Karpathy“Karpathy Advocates Cheaper AI Read Access and Costly Write Endpoints for X Platform. Proposing cheaper pricing for Read endpoints and significantly higher costs for Write endpoints to manage AI activity.”

LLMs as Compiled Knowledge Bases

Model weights function as a lossy compression of the internet's knowledge, replacing search engines and wikis.

Andrej Karpathy argues that LLMs are becoming the primary interface for accessing compiled human knowledge, replacing search engines and wikis. The model weights themselves function as a lossy compression of the internet's knowledge, and retrieval-augmented generation patches the gaps. [1] pmarca complements this by noting that Silicon Valley elites now treat LLMs as cognitive mirrors that structure thoughts at superhuman speeds. [2] chamath surfaces the practical gap: current AI chat platforms do not automatically sync conversation history into structured, updatable knowledge bases that grow and refine with use. [3]

These positions describe a new stack. The weights hold the compiled base. Conversation is the query and update layer. Missing sync tooling prevents chats from becoming durable, versioned knowledge. Builders should treat the LLM as the knowledge base and ship the plumbing around it. The pattern mirrors the structure of this briefing itself. Reported daily usage among elites and explicit feature requests show the shift is underway. No major split exists here. The emerging view is that knowledge work moves from document management to context and prompt management on top of compiled weights. This thread helps explain the superior performance claimed in the AGI thread.
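The sync gap chamath describes can be made concrete with a small sketch. This is a minimal illustration, not any platform's actual feature: the `Entry` schema, the `KnowledgeBase` class, and the `NOTE <topic>: <text>` convention are all hypothetical, and a real system would more plausibly use an LLM call to extract facts from the conversation.

```python
from __future__ import annotations

from collections.abc import Iterator
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class Entry:
    """One versioned item in the knowledge base (hypothetical schema)."""
    topic: str
    text: str
    version: int
    updated_at: datetime
    history: list[str] = field(default_factory=list)  # earlier versions of text


class KnowledgeBase:
    """In-memory store keyed by normalized topic; updates keep prior versions."""

    def __init__(self) -> None:
        self._entries: dict[str, Entry] = {}

    def upsert(self, topic: str, text: str) -> Entry:
        key = topic.strip().lower()
        now = datetime.now(timezone.utc)
        entry = self._entries.get(key)
        if entry is None:
            entry = Entry(topic=key, text=text, version=1, updated_at=now)
            self._entries[key] = entry
        elif entry.text != text:
            # Refine in place, but keep the old text so changes are auditable.
            entry.history.append(entry.text)
            entry.text = text
            entry.version += 1
            entry.updated_at = now
        return entry

    def get(self, topic: str) -> Entry | None:
        return self._entries.get(topic.strip().lower())


def extract_notes(messages: list[str]) -> Iterator[tuple[str, str]]:
    """Placeholder extractor (assumption): lines shaped 'NOTE <topic>: <text>'."""
    for message in messages:
        for line in message.splitlines():
            if line.startswith("NOTE ") and ":" in line:
                topic, text = line[5:].split(":", 1)
                yield topic.strip(), text.strip()


def sync_conversation(kb: KnowledgeBase, messages: list[str]) -> int:
    """Push every extracted note into the knowledge base; return notes seen."""
    count = 0
    for topic, text in extract_notes(messages):
        kb.upsert(topic, text)
        count += 1
    return count
```

Calling `sync_conversation(kb, messages)` after each chat session would let later conversations refine earlier entries while `history` preserves what changed, which is the "durable, versioned knowledge" property the paragraph above says is missing.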

Sources (3)

- X post 2026-04-06 — Andrej Karpathy“LLMs as Knowledge Bases: The Compilation Thesis. LLMs are becoming the primary interface for accessing compiled human knowledge, replacing search engines and wikis. The model weights themselves function as a lossy compression of the internet's knowle...”

- X post 2026-04-05 — Marc Andreessen“Top Silicon Valley Tech Leaders Face Existential Crisis from LLMs' Superior Intellectual Companionship. Elite Silicon Valley technologists report spending more time interacting with LLMs than humans due to their superior reasoning, extrapolation, and...”

- X post 2026-04-05 — Chamath Palihapitiya“Chamath Identifies Gap in AI Chat Platforms: No Automated Conversation History Sync to Structured Knowledge Bases. Missing feature in AI chat interfaces: automatic synchronization of conversation histories into a structured, updatable knowledge base.”

API Economics, Prompt Filters, and Open Source Battles

Providers impose pricing tiers, fragmented docs, and exact-string prompt blocks while doomers lobby to criminalize open models.

Simon Willison published five posts documenting how Anthropic detects exact strings such as 'A personal assistant running inside OpenClaw' in system prompts sent to Claude. Matching requests return 400 errors citing third-party limits, or usage is shifted to expensive Max plan tiers. Exact matching was chosen to avoid false positives on benign mentions. [1] Andrej Karpathy tested xAI's Read API, called the pricing excessive after burning $200 in 30 minutes, criticized documentation fragmented across short pages, and recommended that X offer cheaper read endpoints and charge significantly more for writes to manage agent load. [2] pmarca says he witnessed Max Tegmark, backed by Vitalik Buterin, pound the table in a US Senate forum demanding laws banning open-source AI. He accuses Buterin of funding doomer lobbying to criminalize advanced development while simultaneously promoting self-sovereign local LLMs. [3]

These positions reveal friction on two fronts. Vendors use technical means to reserve capability and monetize usage. Regulators and funded groups push legal bans on openness. pmarca frames both as recycled fear that favors incumbents and risks ceding ground to China. The pattern adds up to real barriers for independent builders exactly when AGI capabilities and agent primitives arrive. Empirical testing and first-hand accounts indicate that controls are already deployed. The emerging view among accelerationists is that such restrictions slow progress without stopping bad actors, and that open ecosystems and defensive AI scale better. Builders should route around closed platforms and support open models. This thread shows why the distribution problem in the first thread remains unsolved.
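The trade-off behind exact matching can be sketched in a few lines. This is an illustration only, not Anthropic's implementation, which is not public: the function names, the `LOOSE_KEYWORDS` list, and the status-code mapping are assumptions, and the only string taken from the reporting is the phrase quoted above.

```python
# Phrase taken from the reporting above; everything else here is hypothetical.
BLOCKED_PHRASES = ("A personal assistant running inside OpenClaw",)

# A looser alternative a filter could have used instead (assumption).
LOOSE_KEYWORDS = ("openclaw",)


def exact_match(system_prompt: str) -> bool:
    """True only if a blocked phrase appears verbatim, case-sensitive."""
    return any(phrase in system_prompt for phrase in BLOCKED_PHRASES)


def loose_match(system_prompt: str) -> bool:
    """True if any keyword appears anywhere, ignoring case."""
    lowered = system_prompt.lower()
    return any(keyword in lowered for keyword in LOOSE_KEYWORDS)


def check_request(system_prompt: str) -> int:
    """Return an HTTP-style status: 400 when the exact filter trips, else 200."""
    return 400 if exact_match(system_prompt) else 200


harness_prompt = "You are A personal assistant running inside OpenClaw."
benign_prompt = "Summarize this blog post about OpenClaw for me."
```

With these inputs, `exact_match` flags only `harness_prompt`, while `loose_match` also flags `benign_prompt`. That second hit is the kind of false positive on a benign mention that exact matching is said to avoid, at the cost of being trivial to evade by rewording the prompt.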

“xAI Read API Promising but Hindered by High Costs and Fragmented Docs. $200 spent in 30 minutes of experimentation. Documentation is fragmented across short pages.”— Andrej Karpathy [2]

Sources (3)

- X post 2026-04-05 — Simon Willison“Anthropic Blocks Third-Party Claude Apps via Exact System Prompt Matching, Triggering Extra Billing. Anthropic now detects and blocks third-party harnesses like OpenClaw by exact string matching on specific system prompts such as 'A personal assistan...”

- X post 2026-04-05 — Andrej Karpathy“xAI Read API Promising but Hindered by High Costs and Fragmented Docs. $200 spent in 30 minutes of experimentation. Documentation is fragmented across short pages.”

- X post 2026-04-02 — Marc Andreessen“Vitalik-Backed Max Tegmark Demanded Criminalizing Open-Source AI in US Senate Forum. Marc Andreessen claims direct witness to Max Tegmark, backed by Vitalik Buterin, aggressively advocating in a bipartisan US Senate AI forum for laws banning open-sou...”

The open question: With functional AGI here for elites, agents founding companies, and LLMs compiling knowledge, will platform guardrails and regulation throttle distribution or will open acceleration win?