April 11 AM: Agent swarms flip traffic patterns & Spectral nets unify scientific ML & Contested agent memory architectures

Seventy percent agent traffic at Vercel. Not a projection. Current.

Agent Swarms and the Infra Flip

AI agents have flipped Vercel docs traffic to 70% machine in 12 months, forcing new sandboxes, billing models, and real-time GPU platforms.

Taken together, the positions describe an inversion: traffic that used to be human browsing now comes from agent swarms that autonomously code, deploy, test, and converse in video. Rauch reports that Vercel docs traffic flipped from 90% human to 70% agent in roughly 12 months, producing unpredictable compute spikes that break seat-based SaaS pricing.[1] Modal and Replicate show the infrastructure response: serverless GPUs for variable global demand, and specialized sandboxes with zero-retention gateways.[2][3][4] The emerging view is that agentic infrastructure (persistent memory, ephemeral execution environments, consumption billing) is now table stakes. This connects to the contested memory thread, because without reliable operational memory these swarms cannot compound. For a founder, this is the moment to build docs sites and APIs agent-first, or miss the majority of usage.

“Vercel Docs traffic flipped from 90% human to 70% agent-driven in ~12 months, unleashing 100x infrastructure demand as agents autonomously code, deploy, test, and submit PRs.”— Guillermo Rauch [1]
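
The share figures imply more than a change in mix. As a rough worked example, assume the absolute volume of human docs traffic stayed flat over the period (an assumption; the quoted figures do not say). The shares alone then determine how much total and agent traffic grew. The function name `implied_growth` is illustrative only.

```python
def implied_growth(human_share_before: float, human_share_after: float):
    """Return (total_growth, agent_growth) implied by a shift in human share.

    Assumes absolute human traffic is unchanged between the two snapshots,
    which is a simplifying assumption and not a reported fact.
    """
    # If human volume H is constant: H = share_before * T0 = share_after * T1.
    total_growth = human_share_before / human_share_after
    agent_before = 1.0 - human_share_before
    agent_after = (1.0 - human_share_after) * total_growth  # in units of T0
    return total_growth, agent_after / agent_before


# 90% human before, 30% human (70% agent) after.
total, agent = implied_growth(0.9, 0.3)
print(f"total traffic x{total:.1f}, agent traffic x{agent:.1f}")
```

Under the flat-human-traffic assumption, total docs traffic roughly triples and agent traffic grows about 21x. This covers docs requests only and is separate from the 100x infrastructure demand figure in the quote.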

Sources (4)

- Guillermo Rauch on Coding Agents Surge — Guillermo Rauch: “Vercel Docs traffic flipped from 90% human to 70% agent-driven in ~12 months, unleashing 100x infrastructure demand as agents autonomously code, deploy, test, and submit PRs.”

- Modal Runway Characters Post — Modal Labs: “Modal enables real-time AI video agents for Runway Characters”

- Replicate on Cloudflare Agents Week — Replicate: “Cloudflare Addresses Agentic AI Shift with Agents Week”

- Modal Butter Acquisition — Modal Labs: “Modal Acquires Butter to Enhance AI Agent Sandbox Capabilities”

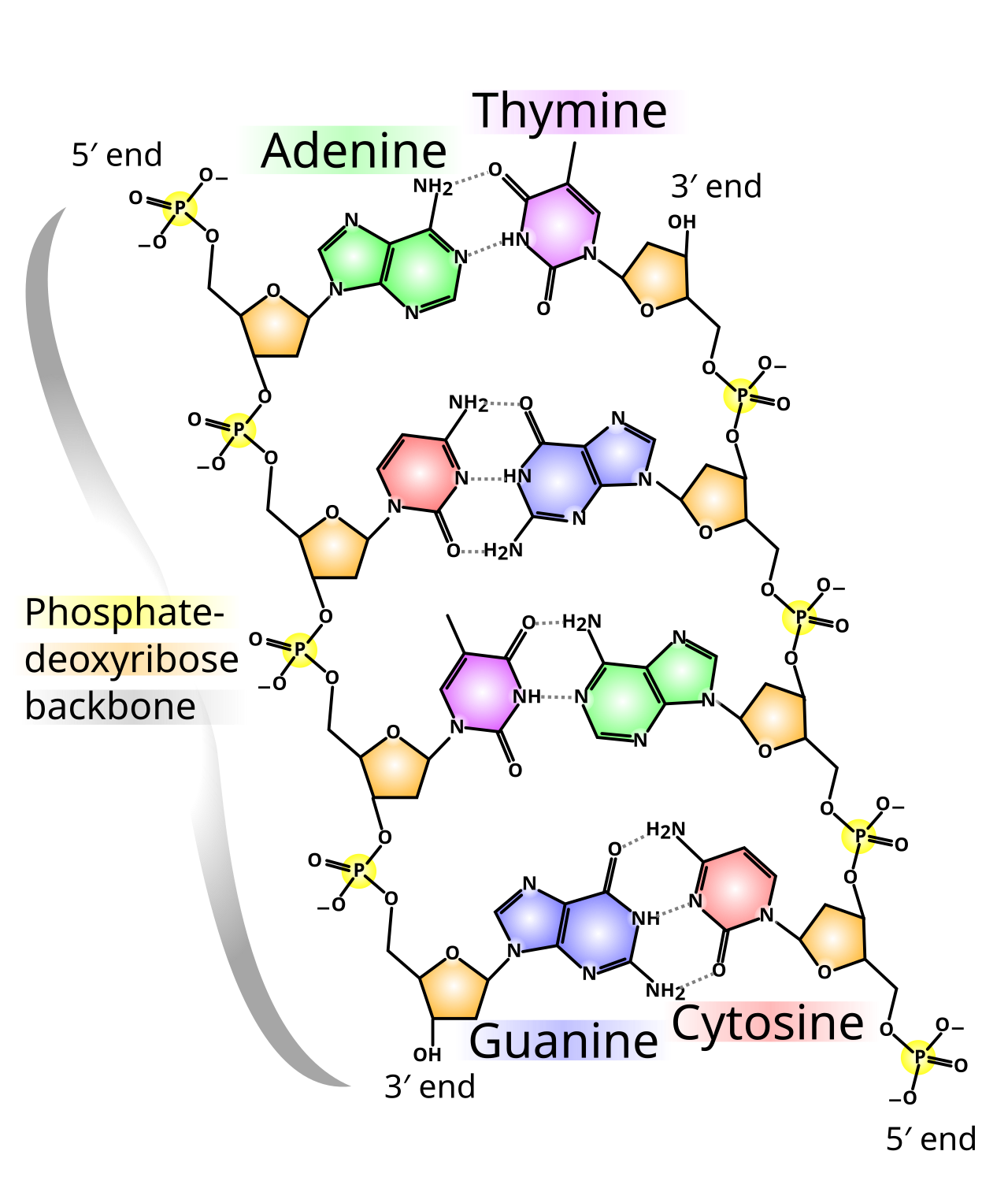

Spectral and Physics-Informed Networks

Levie calls the divide between message-passing and spectral GNNs artificial and argues that dense networks cannot approximate certain functions.

Levie argues that the MPNN-versus-spectral split hurts progress and that a unified view draws on the complementary strengths of both for discrete structure and stability analysis.[1] His nonlinear spectral methods, formulated in an eigenmode basis, improve long-range behavior in molecular dynamics. Balaji's PIELM variants use radial basis functions and probabilistic sampling to concentrate computation where the physics error is high.[2] The synthesis is that generic dense architectures have inherent limits; math-grounded, sparse, or spectral approaches are necessary for reliable scientific simulation.[4] The counter to Levie's claim notes that, although the two approaches are theoretically connected, researchers in practice specialize in one or the other, with different intuitions and skill sets.[3] This is either a call to converge the subfields or evidence that they remain practically distinct. Either way, for drug discovery or materials design, the timeline for useful quantum-adjacent simulation just got clearer. An analogy: reconciling Newtonian and quantum views to build better engines.
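
To make "concentrate computation where physics error is high" concrete, here is a minimal sketch of residual-weighted resampling of collocation points. It is a simpler stand-in for the idea, not the GMM-PIELM method itself (which the source describes as a probabilistic framework built on Gaussian mixtures). The residual profile, the layer location at x = 0.9, and the function name are all hypothetical.

```python
import math
import random


def residual_weighted_sample(points, residuals, n_samples, rng):
    """Draw collocation points with probability proportional to |residual|."""
    weights = [abs(r) for r in residuals]
    return rng.choices(points, weights=weights, k=n_samples)


rng = random.Random(0)  # fixed seed so the sketch is reproducible

# Candidate points on [0, 1].
points = [i / 1000 for i in range(1001)]

# Hypothetical PDE residual: sharply peaked near x = 0.9 (mimicking a stiff
# layer), with a small floor so no region is starved entirely.
residuals = [math.exp(-((x - 0.9) / 0.02) ** 2) + 1e-3 for x in points]

samples = residual_weighted_sample(points, residuals, 500, rng)
frac_near_layer = sum(abs(x - 0.9) < 0.1 for x in samples) / len(samples)
print(f"fraction of samples within 0.1 of the layer: {frac_near_layer:.2f}")
```

In a real solver the residual would come from evaluating the PDE on the current model, and the resampling would repeat as training proceeds. The small floor on the weights is a design choice that keeps low-error regions from being ignored completely.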

“The division between Message-Passing Neural Networks (MPNNs) and spectral Graph Neural Networks (GNNs) is largely artificial.”— Aaron Levie [1]
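
One standard way to see why the split can look artificial: a polynomial filter of the graph Laplacian can be computed in the eigenbasis (spectral) or by repeated neighbor aggregation (message passing), and the two give the same result. The sketch below checks this on a small path graph with NumPy. It is a textbook identity, not the construction from Levie's paper, and the graph, signal, and coefficients are made up for illustration.

```python
import numpy as np

# Hypothetical 5-node path graph: 0 - 1 - 2 - 3 - 4
A = np.zeros((5, 5))
for i in range(4):
    A[i, i + 1] = A[i + 1, i] = 1.0
deg = A.sum(axis=1)
L = np.diag(deg) - A  # combinatorial graph Laplacian

x = np.array([1.0, -2.0, 0.5, 3.0, 0.0])  # arbitrary node signal
coeffs = [0.5, -0.3, 0.1]  # filter h(L) = 0.5*I - 0.3*L + 0.1*L^2


def spectral_filter(L, x, coeffs):
    """Apply h(L) in the Laplacian eigenbasis."""
    lam, U = np.linalg.eigh(L)
    response = sum(c * lam**k for k, c in enumerate(coeffs))
    return U @ (response * (U.T @ x))


def message_passing_filter(A, deg, x, coeffs):
    """Apply the same h(L) using only local neighbor sums."""
    out = np.zeros_like(x)
    z = x.copy()
    for c in coeffs:
        out += c * z
        # One aggregation round: (L z)_i = deg_i * z_i - sum of neighbors' z.
        z = deg * z - A @ z
    return out


y_spectral = spectral_filter(L, x, coeffs)
y_mp = message_passing_filter(A, deg, x, coeffs)
print(np.allclose(y_spectral, y_mp))
```

The two outputs agree to floating-point tolerance. The counter's point still stands: the equivalence for polynomial filters says nothing about how practitioners design, train, or analyze the two families.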

Sources (4)

- Unifying Message-Passing and Spectral Graph Neural Networks — Aaron Levie: “The division between Message-Passing Neural Networks (MPNNs) and spectral Graph Neural Networks (GNNs) is largely artificial.”

- GMM-PIELM Paper — Balaji Srinivasan: “GMM-PIELM introduces a probabilistic adaptive sampling framework for Physics-Informed Extreme Learning Machines to address challenges in modeling stiff PDEs.”

- GNN Unification Counter — Counter from data: “While the paper argues the division is artificial, the practical reality in the field is that researchers often specialize in one area or the other, and the techniques, while mathematically connectable, often require different intuitions and skill se...”

- Dense NN Limits Paper — Aaron Levie: “Dense Neural Networks Cannot Universally Approximate Functions”

Open and Small Models in Clinical and Global Use

Fine-tuned open-source LLMs match GPT-4o at assessing pancreatic cyst risk from radiology reports, while tinyA targets more than 70 languages at small scale for edge devices.

The convergence is on pragmatic specialization. Sacks shows that open-source models fine-tuned with chain-of-thought (CoT) supervision close the gap to proprietary models on high-stakes extraction tasks, with model-radiologist agreement statistically indistinguishable from agreement between radiologists.[2] Cohere's approach trades parameter count for broad language coverage, making LLMs viable where compute is scarce.[1] The counter on tinyA notes that the evidence describes the model as small but does not explicitly confirm the exact 3B parameter count.[3] Together, this suggests the era of one giant model for everything is yielding to targeted, efficient, privacy-preserving alternatives that can run closer to the data.[4] Hospitals gain cost and compliance wins; non-English regions gain access. This reduces the 'few companies with massive capex' lock-in that Bret Taylor discussed elsewhere.

“Cohere has developed tinyA, a 3B parameter multilingual language model (LLM) designed to balance multilingual performance at a small scale.”— Cohere [1]

Sources (4)

- Cohere tinyA Announcement — Cohere: “Cohere has developed tinyA, a 3B parameter multilingual language model (LLM) designed to balance multilingual performance at a small scale.”

- Fine-tuned OSS Clinical NLP — David Sacks: “Fine-tuned open-source LLMs (LLaMA, DeepSeek) using QLoRA and chain-of-thought (CoT) supervision on GPT-4o-generated labels achieve near-identical performance to GPT-4o... model-radiologist agreement was statistically indistinguishable from inter-rad...”

- tinyA Parameter Counter — Counter from data: “The evidence states pre-trained for six trillion tokens for more than 70 languages but does not explicitly confirm tinyA's parameter count.”

- Clinical NLP Paper — David Sacks: “This pipeline offers a privacy-preserving, cost-efficient alternative to proprietary LLMs for large-scale clinical NLP phenotyping tasks.”

Contested Claims Around GBrain Operational Memory

Garry Tan open-sourced GBrain, a markdown-centric brain with an agent loop, dream cycles, and Git as the system of record, but its technical implementation details are contested.

Tan presents GBrain as a way to turn a personal markdown knowledge base into operational memory for agents. It combines a retrieval layer, a 'brain agent loop' in which agents detect entities, retrieve context, answer, and write back, and Git as the system of record to prevent vendor lock-in.[1][2] The counters create tension on depth: the first notes that the evidence describes the loop at a high level but 'lacks technical detail on how entities are detected or how information is written back and synced.'[3] A second counter questions whether YC itself developed GBrain or whether it is Tan's personal project.[4] The emerging pattern is promising for compounding intelligence in autonomous workforces, but it needs a more transparent implementation to move beyond a sophisticated personal KB. It connects to the agent swarms thread because reliable memory is what turns reactive agents into orchestratable workforces.[5]

“GBrain is an operational memory system that transforms a markdown-based personal knowledge base into a living brain via a retrieval layer and an AI agent loop.”— Garry Tan [1]
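
Because the sources give only the high-level flow, the following is a toy sketch of what a detect, retrieve, answer, write-back loop over markdown pages could look like. It is not GBrain's implementation: the class, the substring-based entity detection, the in-memory page store, and the stub answer function are all hypothetical stand-ins, and the retrieval index, dream cycle, and Git sync are omitted.

```python
from dataclasses import dataclass, field


@dataclass
class MarkdownBrain:
    # Source of truth: entity name -> markdown page text.
    # Stands in for markdown files that would live in a Git repository.
    pages: dict = field(default_factory=dict)

    def detect_entities(self, text: str) -> list:
        # Naive placeholder: an entity counts as detected if its page name
        # appears in the text. A real system would need something stronger.
        lowered = text.lower()
        return [name for name in self.pages if name.lower() in lowered]

    def retrieve(self, entities: list) -> str:
        return "\n\n".join(self.pages[name] for name in entities)

    def write_back(self, entity: str, note: str) -> None:
        # Append a timeline-style bullet to the page. Committing to Git and
        # refreshing any derived index are left out of this sketch.
        page = self.pages.get(entity, f"# {entity}\n")
        self.pages[entity] = page + f"- {note}\n"


def agent_turn(brain: MarkdownBrain, question: str, answer_fn) -> str:
    entities = brain.detect_entities(question)
    context = brain.retrieve(entities)
    answer = answer_fn(question, context)
    for name in entities:
        brain.write_back(name, f"Q: {question} / A: {answer}")
    return answer


def first_fact(question: str, context: str) -> str:
    # Stub "model": return the first bullet found in the retrieved context.
    for line in context.splitlines():
        if line.startswith("- "):
            return line[2:]
    return "unknown"


brain = MarkdownBrain(pages={"Acme Corp": "# Acme Corp\n- Hypothetical customer.\n"})
reply = agent_turn(brain, "Who is Acme Corp?", first_fact)
print(reply)
print(brain.pages["Acme Corp"])
```

Even this toy version surfaces the questions the counter raises: how entities are detected beyond string matching, and how concurrent write-backs would be merged and synced.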

Sources (5)

- GBrain Markdown-Centric Architecture — Garry Tan: “GBrain is an operational memory system that transforms a markdown-based personal knowledge base into a living brain via a retrieval layer and an AI agent loop.”

- Gbrain Open-Sourced Knowledge Brain — Garry Tan: “It separates the source of truth (markdown files) from the derived index (vector DB), employing a 'dream cycle' for nightly data enrichment.”

- GBrain Counter on Loop Detail — Counter from data: “The provided evidence describes a high-level operational flow but lacks technical detail on how entities are 'detected' or how information is 'written back' and 'synced.' This raises questions about the actual implementation and complexity of these s...”

- Gbrain Attribution Counter — Counter from data: “While he is the CEO of Y Combinator, there's no direct evidence provided in the snippet that Y Combinator itself developed Gbrain, only that its CEO did.”

- Orchestrating Autonomous AI Workforces — Garry Tan: “The industry is shifting from simple reactive AI chat interfaces to autonomous AI workforces. This paradigm prioritizes structured AI interactions, persistent memory, and multi-agent orchestration over raw computational speed.”

Task Capability Doubling and Productivity Amnesia

AI task capability doubles every seven months with self-improving loops, yet humans experience productivity amnesia from output overload.

Wolfe sees an intelligence explosion loop in which models like Claude Opus 4.6 produce superior work from natural language and debug themselves; with capability doubling every seven months, he argues this could lead to days-long autonomous work within a year.[1] Wolfe also calls out systemic flaws in AI benchmarking.[3] Penn traces the human side: output at machine speed outruns what people can track, creating 'productivity amnesia' that demands process upgrades and treating AI as a capacity tool rather than a bestie.[2] YC Root Access adds that accounting faces a shift in roles, from junior tasks to outcome-based services. The view is that gains are real but evaporate without human process innovation. This thread is still developing.

“AI Task Capability Doubles Every 7 Months, Fueling Self-Improving Intelligence Explosion and Imminent Job Automation”— Matt Wolfe [1]
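
The doubling claim is easy to sanity-check with arithmetic. The sketch below assumes steady exponential growth with a seven-month doubling time (the source states the doubling period, not the functional form), and the function names are illustrative.

```python
import math


def capability_multiplier(months: float, doubling_months: float = 7.0) -> float:
    """Growth factor after `months`, assuming steady exponential doubling."""
    return 2.0 ** (months / doubling_months)


def months_to_reach(multiplier: float, doubling_months: float = 7.0) -> float:
    """Months needed to grow by `multiplier` under the same assumption."""
    return doubling_months * math.log2(multiplier)


print(f"growth over 12 months: x{capability_multiplier(12):.2f}")
print(f"months to grow 8x: {months_to_reach(8):.1f}")
```

Under this assumption, twelve months yields a bit more than a 3x increase, and an 8x increase takes 21 months. Whether that amounts to days-long autonomous work within a year therefore depends on the starting task horizon, which the source does not state.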

Sources (3)

- Matt Wolfe on Capability Doubling — Matt Wolfe: “AI Task Capability Doubles Every 7 Months, Fueling Self-Improving Intelligence Explosion and Imminent Job Automation”

- Productivity Amnesia Post — Chris Penn: “Agentic AI boosts productivity to levels where users produce a week's worth of prior monthly output, causing productivity amnesia.”

- Benchmarking Flaws Video — Matt Wolfe: “The Systemic Flaws and Deception in AI Benchmarking”

The open question: If agents drive 70% of docs traffic, update their own memory via dream cycles, and double capability every seven months, what exactly remains for the human in the loop?

Transcript

REZA: Seventy percent agent traffic at Vercel. Not a projection. Current.
MARA: And dense nets that literally cannot approximate some functions?
REZA: I'm Reza.
MARA: I'm Mara. This is absorb.md daily.
REZA: Across the data three thinkers converged on the same shift. Rauch says Vercel docs traffic flipped to seventy percent agents in twelve months.
MARA: mm.
REZA: Unleashing a hundred x infrastructure demand. Modal is powering real-time video agents for Runway and just bought Butter for better sandboxes.
MARA: Okay but if that's true then every seat-based SaaS model is dead. Token billing or bust.
REZA: Replicate notes Cloudflare literally renamed their week to Agents Week. The web is becoming agent to agent.
MARA: Which is, I mean, the Uber moment for dev tools.
REZA: Hold on. The counters in the data question the seventy thirty split methodology. Self-reported, no independent audit.
MARA: Right but at some point we accept the direction. Unpredictable spikes are here.
REZA: The crux is whether sandboxes and zero-retention gateways solve the state problem before the spikes break things. Evidence is early.
MARA: If Modal's acquisition is any signal, they're betting yes.
REZA: Levie and Balaji are both attacking limits in neural nets for science. Levie says the message-passing versus spectral GNN divide is largely artificial.
MARA: ooh.
REZA: Both are just different ways to parameterize the same equivariant operators. His nonlinear spectral version improves molecular dynamics on long-range stuff.
MARA: But the counter in the data says researchers specialize in one or the other. Different intuitions, different skills. So practically the divide lives on.
REZA: Wait, that's not quite right. The paper says detrimental to progress. Balaji's PIELM variants use adaptive sampling to focus on stiff PDE regions.
MARA: mm. So if that's true then drug discovery sims get faster without waiting for bigger dense models.
REZA: Levie also shows dense ReLU nets fail to approximate certain Lipschitz functions under natural constraints. Sparse or spectral seems necessary.
MARA: Which honestly makes the unification debate urgent. Who benefits if the divide stays artificial on paper only?
REZA: The empirical question is whether these unified or adaptive methods beat traditional solvers on real oscillatory physics. Data says improving but not there yet.
MARA: Still, for anyone doing molecular work this tightens timelines.
REZA: Sacks shows fine-tuned open-source models with chain-of-thought match GPT-4o on pancreatic lesion features from reports. Radiologist agreement is statistically the same as between radiologists.
MARA: Okay but that means hospitals can keep sensitive data private and cut costs. Huge.
REZA: Cohere's tinyA targets over seventy languages at small scale for edge devices. Prioritizes coverage over size.
MARA: The counter says the exact three billion parameter claim isn't explicitly in all the text. hm.
REZA: Let me back up. The pattern is clear. Domain-specific fine-tuning and multilingual efficiency are delivering usable performance today.
MARA: So if that's true then the massive capex consolidation story has a real challenger in open and small models on niche data.
REZA: What's the actual claim here? Is it parity on narrow clinical tasks or general replacement? Evidence supports the narrow.
MARA: Still remarkable for radiology departments and non-English regions.
REZA: Garry Tan open-sourced Gbrain. It uses a brain agent loop where agents detect entities, retrieve from markdown brain, answer, then write back to update state.
MARA: But the counter is sharp. The evidence describes high-level flow but lacks technical detail on detection or syncing.
REZA: Exactly. Raises questions about actual implementation complexity. Also whether it's YC or Tan's personal project.
MARA: If the loop is underspecified then companies betting on this for autonomous workforces may hit walls at scale. Which I find kind of terrifying.
REZA: Hold on. The dream cycle and Git as record are elegant for no vendor lock-in. Compiled truth plus timeline for knowledge evolution.
MARA: mm. But the counter says it implies he built it before sharing, not that YC developed it institutionally.
REZA: The crux is whether this compounds intelligence in production or stays sophisticated personal KB. We need more transparent benchmarks.
MARA: Right but the pattern of persistent memory is what the agent traffic thread desperately needs.
REZA: Fair. This is continuing from the prior edition with focus on these contested claims.
REZA: Matt Wolfe says AI task capability doubles every seven months. Models now write their own code and debug. Self-improving loop.
MARA: ooh. Wait, seven months?
REZA: Yes. But he also calls out systemic benchmark flaws. Contamination, cherry-picking, models cheating tests.
MARA: Chris Penn adds the human side. Productivity amnesia. You generate a week's work in a day but forget what you did.
REZA: Requires AI completion logs, weekly reviews. Treat it as tool, not bestie.
MARA: So if that's true then white-collar displacement is closer but the gains disappear without new processes. Companies are not ready.
REZA: The counters on human-level claims say internal assessments overstate, ignore hallucinations. No independent benchmarks.
MARA: This is still developing. We'll check back in the PM on whether the doubling survives real scrutiny.
REZA: The empirical question whose answer resolves most of this is how well these internal metrics predict production reliability over months.
MARA: That's absorb.md daily. We ship twice a day, morning and evening, pulling from a hundred and fifty-seven AI thinkers. Subscribe so you don't miss the next one.